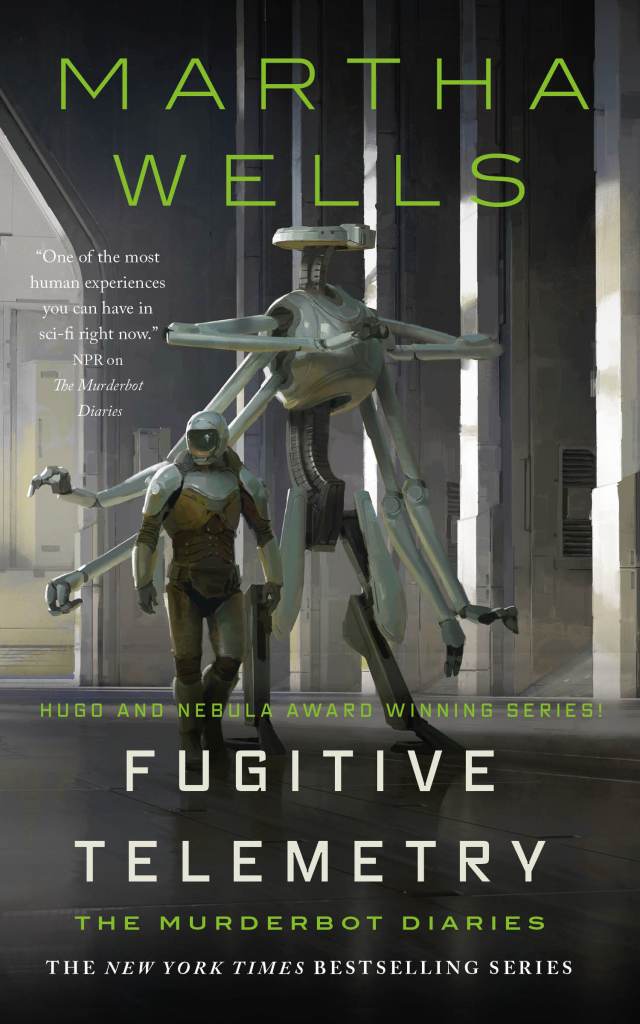

KathyReid@bookwyrm.social rated Fugitive Telemetry: 4 stars

Fugitive Telemetry by Martha Wells

No, I didn't kill the dead human. If I had, I wouldn't dump the body in the station mall.

When …

technology. cybernetics. systems. science fiction. languages. machine learning. speech recognition.

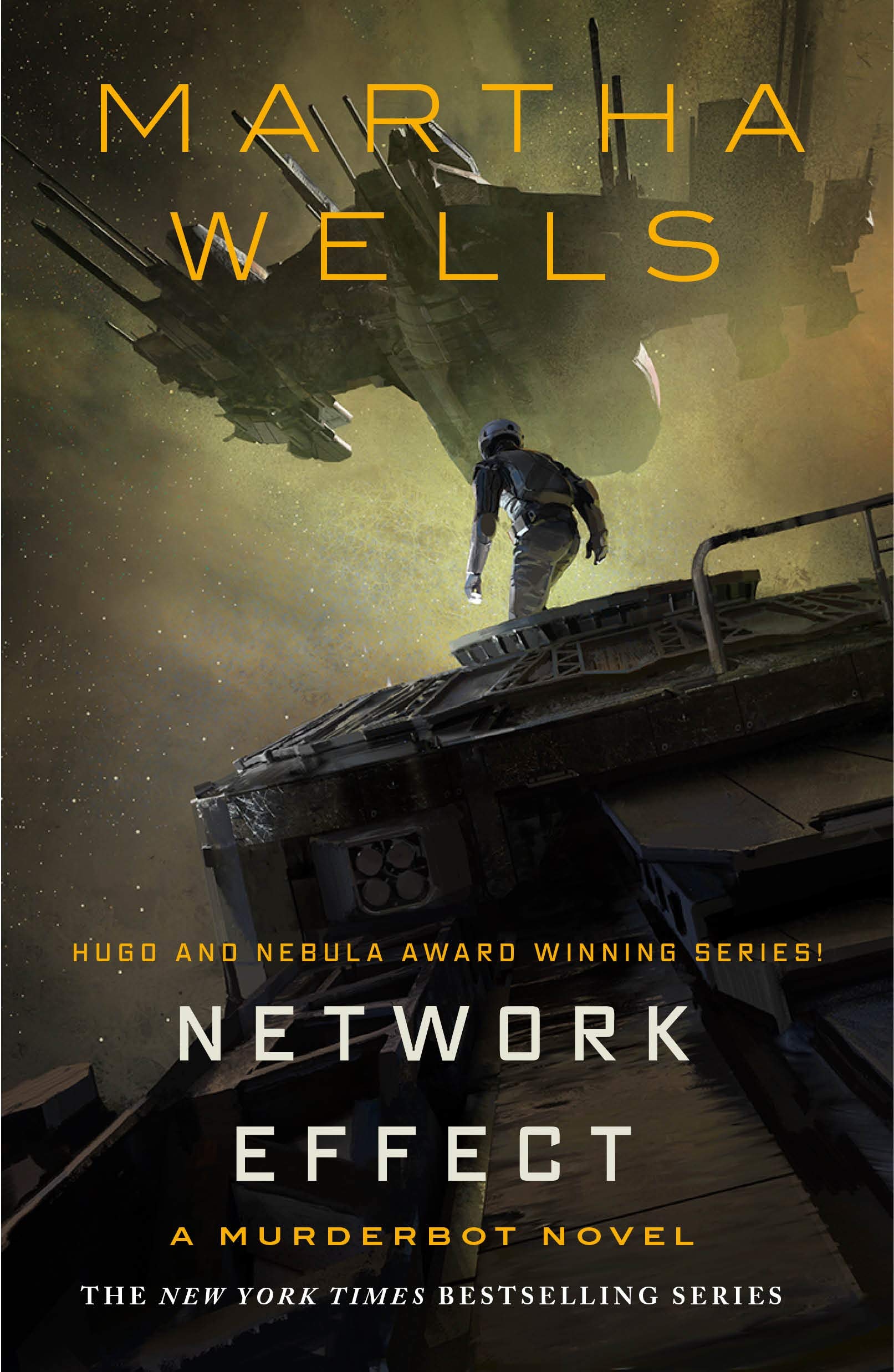

WINNER of the 2021 Hugo, Nebula and Locus Awards!

The first full-length novel in Martha Wells' New York Times and …

"As a heartless killing machine, I was a complete failure."

In a corporate-dominated spacefaring future, planetary missions must be approved …

SciFi’s favorite antisocial A.I. is again on a mission. The case against the too-big-to-fail GrayCris Corporation is floundering, and more …

It has a dark past—one in which a number of humans were killed. A past that caused it to christen …

All Systems Red is a 2017 science fiction novella by American author Martha Wells. The first in a series called …

"A groundbreaking approach to transforming traumatic legacies passed down in families over generations, by an acclaimed expert in the field …

In this cleverly constructed series of case studies, Virginia Eubanks takes a critical eye to the automation of social welfare systems in three separate contexts in the United States. Through rich, qualitative interviews, she continuously advances her key argument: that by automating our social welfare systems - housing, welfare, social supports - we are manifesting the poorhouse - and its affordances - for the age of big data.

Her work is mature ethnography: she forms close, trusted bonds with actors from all parts of the welfare systems she investigates, providing a nuanced, multi-faceted exploration of how the rationalisation and automation of welfare systems embody and perpetuate a fundamentally flawed axiology. In a conclusion that Donella Meadows would be proud of, she entreats us to upend the system through solidarity, collective action and the recognition that poverty - and its automation - is a choice. We should choose better.

From Daniel H. Pink, the author of the groundbreaking bestseller A Whole New Mind, comes his next big idea book: …
